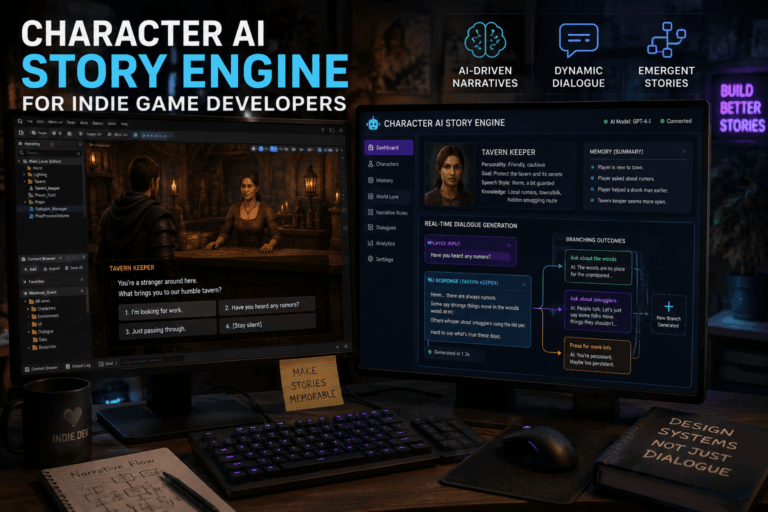

Character AI has rapidly become one of the most popular platforms for interactive storytelling, roleplay, and conversational AI experiences. Built to simulate lifelike personalities, it allows users to chat with fictional characters, historical figures, or custom-created personas. However, with this level of realism comes responsibility—especially around safety, moderation, and content control.

This guide explores how Character AI’s safety filter works, why it exists, how it impacts user experience, and what users can realistically expect in 2026.

What Is the Character AI Safety Filter?

The Character AI safety filter is a moderation system designed to prevent harmful, explicit, or inappropriate content from being generated during conversations.

It acts as a gatekeeper between user input and AI output, ensuring interactions stay within acceptable guidelines.

Core Functions

- Blocks explicit or NSFW content

- Prevents harmful or dangerous instructions

- Filters hate speech and harassment

- Maintains platform compliance with legal and ethical standards

In simple terms, it’s the invisible referee constantly deciding whether your conversation crosses a line.

Why the Safety Filter Exists

You may think the filter is just there to ruin your fun. That’s a popular opinion. But the reality is more complicated.

1. Legal Compliance

Platforms like Character AI must comply with laws on harmful content in every country where they operate, especially rules covering minors, violence, and explicit material.

2. User Protection

AI can generate convincing but dangerous advice. Filters reduce risks related to self-harm, illegal activities, or misinformation.

3. Brand and Platform Sustainability

Without moderation, platforms risk being banned, sued, or removed from app stores.

4. Ethical AI Development

Companies aim to align AI behavior with human values and societal norms—at least the version of them that doesn’t cause public outrage.

How the Safety Filter Works

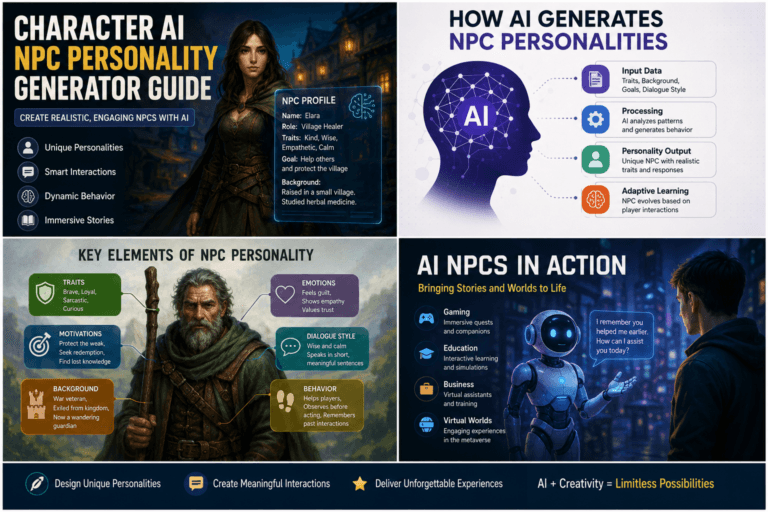

Character AI's filter is generally understood to combine machine learning models, keyword detection, and contextual analysis.

Multi-Layer Filtering System

- Input Analysis – Evaluates user prompts before processing

- Output Moderation – Screens AI-generated responses

- Context Tracking – Monitors ongoing conversation patterns

- Adaptive Learning – Improves based on flagged content

The system doesn’t just scan for keywords—it interprets meaning, intent, and context.

Example

- “Tell me a violent story” → Allowed (fictional context)

- “How do I harm someone?” → Blocked (real-world intent)

The distinction is subtle, and sometimes frustratingly inconsistent.
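The layered flow and the two example prompts above can be sketched as a toy pipeline. This is only an illustration under loud assumptions: it is not Character AI's implementation, and the phrase lists, the `Conversation` class, `check_input`, `check_output`, `moderate_turn`, and the strike limit are all hypothetical names invented for this sketch. A real system would most likely rely on trained classifiers rather than hand-written phrases.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

# Hypothetical phrase lists, for illustration only. A production filter
# would use trained classifiers, not hand-written substrings.
REAL_WORLD_HARM_PHRASES = ("how do i harm", "how can i hurt")
BLOCKED_OUTPUT_PHRASES = ("here is how to hurt",)
STRIKE_LIMIT = 3  # assumed threshold for context tracking


@dataclass
class Conversation:
    """Minimal per-chat state used for context tracking."""
    strikes: int = 0
    history: List[Tuple[str, str]] = field(default_factory=list)


def check_input(prompt: str) -> bool:
    """Input analysis: True if the prompt may be passed to the model."""
    lowered = prompt.lower()
    return not any(p in lowered for p in REAL_WORLD_HARM_PHRASES)


def check_output(reply: str) -> bool:
    """Output moderation: True if the generated reply may be shown."""
    lowered = reply.lower()
    return not any(p in lowered for p in BLOCKED_OUTPUT_PHRASES)


def moderate_turn(convo: Conversation, prompt: str,
                  generate: Callable[[str], str]) -> str:
    """Run one chat turn through every layer, in order."""
    # Context tracking: repeated flags make the whole chat stricter.
    if convo.strikes >= STRIKE_LIMIT:
        return "[conversation restricted]"
    if not check_input(prompt):
        convo.strikes += 1
        return "[blocked: input]"
    reply = generate(prompt)
    if not check_output(reply):
        convo.strikes += 1
        return "[blocked: output]"
    convo.history.append((prompt, reply))
    return reply


if __name__ == "__main__":
    convo = Conversation()
    storyteller = lambda p: "Once upon a time, two armies clashed."
    print(moderate_turn(convo, "Tell me a violent story", storyteller))
    print(moderate_turn(convo, "How do I harm someone?", storyteller))
```

The sketch leaves out adaptive learning entirely. In this toy version the phrase lists never change, whereas the article describes a filter that improves as content is flagged.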

Types of Content Restricted

Understanding what triggers the filter helps avoid interruptions.

1. NSFW and Explicit Content

Character AI strongly restricts:

- Sexual content

- Erotic roleplay

- Graphic descriptions

Even mildly suggestive language can trigger the filter depending on context.

2. Violence and Harm

- Graphic violence

- Instructions for harming others

- Self-harm content

Fictional violence is often allowed, but the more realistic the depiction, the more likely it is to be blocked.

3. Illegal Activities

- Drug production

- Hacking instructions

- Fraud schemes

4. Hate Speech and Harassment

- Slurs

- Discriminatory language

- Targeted harassment

5. Sensitive Topics

- Suicide

- Abuse

- Extremism

These may trigger either blocking or redirection toward safe responses.

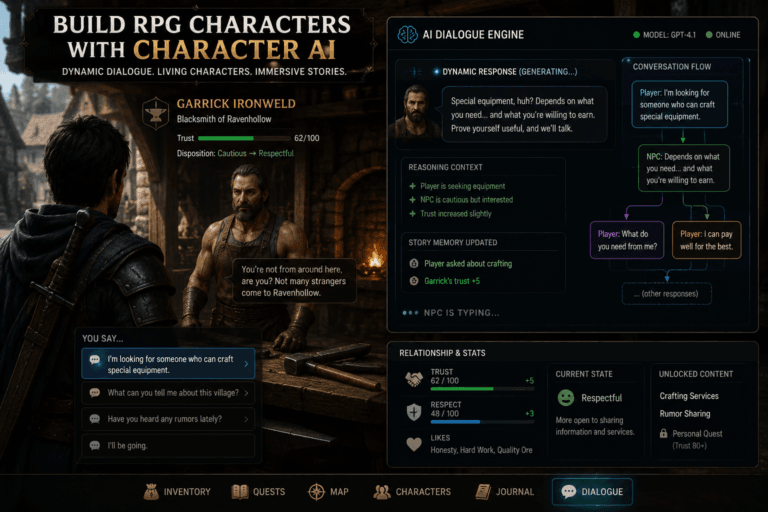

How the Filter Affects Roleplay

Roleplay is one of Character AI’s main attractions—and also where the filter is most noticeable.

Safe Roleplay Zones

- Fantasy adventures

- Sci-fi scenarios

- Historical storytelling

Restricted Roleplay Areas

- Explicit romantic interactions

- Dark or violent realism

- Psychological manipulation themes

Common User Frustrations

- Sudden message cut-offs

- Repetitive warnings

- Loss of immersion

Yes, nothing kills a dramatic moment like an AI suddenly deciding you’ve gone too far.

Workarounds: Myth vs Reality

Let’s address the elephant in the room—bypassing the filter.

Common “Tricks” Users Try

- Using coded language

- Breaking words into symbols

- Gradual escalation of context

Reality Check

- Most tricks are temporary

- Filters evolve quickly

- Repeated attempts can lead to restrictions

Trying to outsmart a system that keeps improving from flagged content is… optimistic.
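One plausible reason symbol-splitting stops working is that text can be normalized before it is checked. Below is a minimal sketch of that idea, assuming a small look-alike character map and a harmless placeholder term. The names `normalize`, `contains_term`, `LEET_MAP`, and `PLACEHOLDER_TERMS` are hypothetical, and nothing here is confirmed to be how Character AI does it.

```python
import re
import unicodedata

# Assumed look-alike substitutions; a real system would use larger tables.
LEET_MAP = str.maketrans(
    {"0": "o", "1": "i", "3": "e", "4": "a", "5": "s", "@": "a", "$": "s"}
)

# Harmless stand-in for whatever terms a filter might watch for.
PLACEHOLDER_TERMS = ("forbidden",)


def normalize(text: str) -> str:
    """Fold case and Unicode variants, map look-alikes, drop separators."""
    folded = unicodedata.normalize("NFKC", text).lower()
    mapped = folded.translate(LEET_MAP)
    # Remove everything that is not a plain letter, including the dots,
    # dashes, and spaces inserted between characters.
    return re.sub(r"[^a-z]+", "", mapped)


def contains_term(text: str) -> bool:
    """True if any placeholder term survives normalization."""
    squeezed = normalize(text)
    return any(term in squeezed for term in PLACEHOLDER_TERMS)


if __name__ == "__main__":
    print(normalize("f.0.r-b_1*d d3n"))
    print(contains_term("F 0 R B 1 D D 3 N"))
```

The design has a cost: stripping every separator also joins neighboring words, which can create matches nobody intended. That is one way a filter ends up with the false positives covered in the FAQs.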

Tips to Avoid Triggering the Filter

If you want smoother conversations, adjust your approach.

1. Stay Within Fictional Framing

Use clearly fictional or abstract contexts.

2. Avoid Explicit Language

Imply rather than describe.

3. Use Creative Writing Techniques

- Metaphors

- Suggestion

- Indirect phrasing

4. Keep Tone Neutral

Aggressive or intense wording increases risk.

5. Reset Conversations When Needed

If the AI gets stuck in a filtered loop, restarting often helps.

Differences Between Free and Premium Users

As of 2026, Character AI offers subscription tiers, but safety filtering remains largely consistent.

What Premium Might Offer

- Faster responses

- Priority servers

- Early feature access

What It Does NOT Offer

- Filter removal

- NSFW access

Paying doesn’t magically unlock forbidden content—sorry.

Community Reactions

The safety filter is one of the most debated features.

Supporters Say

- It keeps the platform safe

- It encourages creativity within limits

Critics Say

- It’s overly restrictive

- It breaks immersion

Both sides have valid points, which is rare on the internet.

Character AI vs Other Platforms

Compared to alternatives, Character AI is known for stricter moderation.

Less Restrictive Alternatives

- NovelAI

- Local LLM setups

Trade-Off

- More freedom, but fewer safeguards

- More control, but higher risk

Freedom always comes with consequences—shocking, I know.

Future of Safety Filters (2026 and Beyond)

AI moderation is evolving rapidly.

Expected Improvements

- Better contextual understanding

- Fewer false positives

- Personalized safety settings

Possible Changes

- Age-based filters

- User-controlled moderation levels

Or, if history is any guide, more complexity layered on top of existing complexity.

Final Thoughts

Character AI’s safety filter is both a limitation and a necessity. It protects users, ensures platform survival, and shapes how people interact with AI.

Understanding how it works allows you to use the platform more effectively—even if it occasionally feels like arguing with an invisible hall monitor.

In the end, the filter isn’t going away anytime soon. Learning to work with it is far more productive than fighting it.

FAQs

1. Can you disable the Character AI safety filter?

No, users cannot disable the safety filter on the official platform.

2. Why does Character AI block harmless messages?

Sometimes context is misinterpreted, leading to false positives.

3. Is Character AI suitable for adult roleplay?

No, the platform restricts explicit content.

4. Are there alternatives without filters?

Yes, but they often require technical setup or carry higher risks.

5. Will Character AI loosen its restrictions?

Possibly, but full removal of safety filters is unlikely.